For this leading pan-African bank, the main modernization challenge was not scaling its cloud environment. It was operational: how to deliver trusted data faster across multiple African markets while keeping critical banking systems running and governance intact.

A ten-minute delay can matter. It can affect fraud detection, customer engagement, regulatory reporting, or the ability of a business team to act on an opportunity while it is still current.

The institution was expanding its use of AWS for modern data ingestion, analytics, machine learning, and cloud-scale processing. But its existing banking estate still mattered: core transaction systems, on-premises databases, operational platforms, APIs, files, legacy applications, and market-specific systems continued to support daily banking.

The needed transformation, therefore, was not a simple migration. It was a redesign of how trusted, governed data could be delivered across a hybrid banking estate without forcing large-scale data movement or disrupting operational systems.

When Every Data Request Creates More Complexity

In a bank of this scale, data demand comes from every part of the business.

Fraud teams need near real-time access to operational data. Regulatory teams need consistent reporting across markets. Digital teams need governed data delivered over APIs. Analytics teams want to build models using AWS-native services. Business teams may need to validate external or vendor-provided data without waiting for IT to build another pipeline.

Handled separately, each request can become a new extract, copy, pipeline, authentication path, or custom interface, resulting in fragmented governance and increased operational complexity. Over time, that pattern slows modernization. Data copies multiply. Controls are repeated. Source-system changes require downstream coordination. New initiatives wait for access rather than act on insight.

The bank needed a different model: reusable, governed data services that could support reporting, applications, analytics, APIs, data marketplace users, and AI use cases.

A Scalable Cloud Platform With Governed Data Delivery

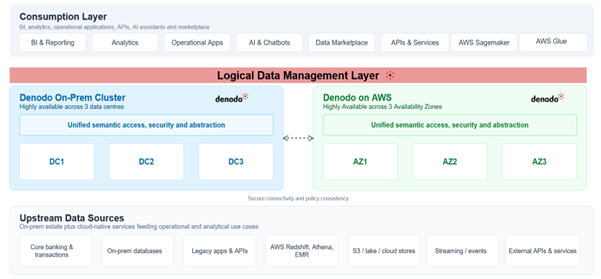

The bank settled on an architecture that combines AWS, as the scalable platform for modern data workloads, with Denodo, as the governed logical layer that connects those workloads to the broader banking estate.

AWS enables cloud-native ingestion, analytics, machine learning, and processing. Services such as Amazon S3, Amazon Redshift, Amazon Athena, Amazon EMR, AWS Glue, and Amazon SageMaker support the bank’s cloud data and AI initiatives.

The Denodo Platform sits between those services, the existing data estate, and the consumption layer, providing a unified access layer for governed data delivery across distributed environments. On premises, it runs as a highly available cluster across multiple data centers. In AWS, it is deployed across multiple Availability Zones. Secure connectivity links the two environments, so the bank can apply the same policies and runtime access controls to data on both sides.

Figure 1: Simplified hybrid data architecture of the banking institution connecting on-premises banking systems with AWS-native data and analytics services.

The architecture separates data consumption from data location. A dashboard, API, operational application, AI assistant, AWS Glue job, SageMaker workload, or Denodo Data Marketplace user can consume live, governed logical views without needing to know or manage where the underlying data physically resides.
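The idea of decoupling consumption from location can be illustrated with a small, self-contained sketch. This is not Denodo's API; `LogicalLayer`, `LogicalView`, and the two fetch functions are hypothetical names invented here to show the pattern: consumers query a view by name, and the physical source behind that name can change without the consumer's call changing.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Row = Dict[str, object]


@dataclass
class LogicalView:
    """A named view whose physical source is hidden from consumers."""
    name: str
    fetch: Callable[[], List[Row]]  # hypothetical connector to the real source


class LogicalLayer:
    """Hypothetical catalog that maps view names to their current source."""

    def __init__(self) -> None:
        self._views: Dict[str, LogicalView] = {}

    def register(self, view: LogicalView) -> None:
        # Re-registering a name swaps the physical source behind it.
        self._views[view.name] = view

    def query(self, name: str) -> List[Row]:
        # Consumers only ever supply the logical name.
        return self._views[name].fetch()


# Hypothetical stand-ins for an on-premises database and a cloud store.
def fetch_from_on_prem() -> List[Row]:
    return [{"account_id": "A1", "balance": 120.0}]


def fetch_from_cloud() -> List[Row]:
    return [{"account_id": "A1", "balance": 120.0}]


layer = LogicalLayer()
layer.register(LogicalView("account_balances", fetch_from_on_prem))
before = layer.query("account_balances")

# Moving the data changes the registration, not the consumer's call.
layer.register(LogicalView("account_balances", fetch_from_cloud))
after = layer.query("account_balances")
```

In this sketch the dashboard or job that calls `layer.query("account_balances")` is unaffected by the move, which is the property the architecture relies on.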

That is the last mile of data delivery: trusted data made available through the channels the business already uses, including analytics, applications, and AI workloads.

The Important Shift: From Data Pipelines to Data Products

The practical change is that data delivery becomes less dependent on custom engineering, duplicated pipelines, and point-to-point integrations for every request.

Denodo enables the bank to expose APIs, file shares, and other non-traditional sources as governed virtual tables. This reduces the need to build bespoke ingestion frameworks every time a new source is required. It also provides teams with a standard way to secure, mask, and control access to those sources through the data layer, helping governance policies remain consistent across distributed systems.
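As a rough illustration of what a governed virtual table over a non-traditional source might look like, the sketch below wraps a stand-in for a vendor API and applies a column mask based on the caller's role. Everything here is an assumption made for illustration: `VirtualTable`, `vendor_api`, the role names, and the last-four-characters masking rule are hypothetical and do not represent Denodo's configuration or the bank's actual policies.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Iterable, List, Set

Row = Dict[str, str]


def mask_all_but_last4(value: str) -> str:
    """Replace every character except the last four with '*'.

    Values of four characters or fewer are returned unchanged in this sketch.
    """
    return "*" * max(len(value) - 4, 0) + value[-4:]


@dataclass
class VirtualTable:
    """Hypothetical governed view over any row-producing source."""
    name: str
    source: Callable[[], Iterable[Row]]
    masked_columns: Dict[str, Callable[[str], str]] = field(default_factory=dict)
    unmasked_roles: Set[str] = field(default_factory=set)

    def select(self, role: str) -> List[Row]:
        # Copy rows so the masking never mutates the source data.
        rows = [dict(r) for r in self.source()]
        if role in self.unmasked_roles:
            return rows
        for row in rows:
            for col, mask in self.masked_columns.items():
                if col in row:
                    row[col] = mask(row[col])
        return rows


# Hypothetical stand-in for a vendor REST API response.
def vendor_api() -> List[Row]:
    return [{"customer": "C-17", "account_number": "0012345678"}]


accounts = VirtualTable(
    name="vendor_accounts",
    source=vendor_api,
    masked_columns={"account_number": mask_all_but_last4},
    unmasked_roles={"fraud_analyst"},
)

masked = accounts.select("marketing")
clear = accounts.select("fraud_analyst")
```

Because the policy lives on the table rather than in each consumer, a new source gets the same masking behavior as soon as it is registered, without a separate ingestion framework.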

The bank is also building toward a governed data marketplace. Users can discover datasets, preview masked data, request access, and subscribe to data products. This matters because self-service in banking cannot mean uncontrolled access. It has to combine usability with policy enforcement, auditability, and regulatory control.
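The request-and-subscribe flow described above can be sketched as a tiny state model with an audit trail. This is a simplified illustration under stated assumptions: `Marketplace`, `DataProduct`, the status values, and the user names are hypothetical and do not reflect the Denodo Data Marketplace's actual interfaces.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Set, Tuple


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"


@dataclass
class DataProduct:
    """Hypothetical data product with an explicit subscriber list."""
    name: str
    subscribers: Set[str] = field(default_factory=set)


@dataclass
class Marketplace:
    """Hypothetical marketplace that records every access decision."""
    audit_log: List[Tuple[str, str, str]] = field(default_factory=list)

    def request_access(self, user: str, product: DataProduct) -> Status:
        # A request alone grants nothing; it only creates an audit entry.
        self.audit_log.append((user, product.name, "requested"))
        return Status.PENDING

    def approve(self, approver: str, user: str, product: DataProduct) -> Status:
        product.subscribers.add(user)
        self.audit_log.append((approver, product.name, f"approved {user}"))
        return Status.APPROVED

    def can_read(self, user: str, product: DataProduct) -> bool:
        return user in product.subscribers


market = Marketplace()
product = DataProduct("customer_360")

status = market.request_access("analyst_a", product)
readable_before = market.can_read("analyst_a", product)

status_after = market.approve("data_owner", "analyst_a", product)
readable_after = market.can_read("analyst_a", product)
```

The point of the sketch is that self-service and control are not in conflict: the user initiates the request, while access changes only on approval and every step is logged.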

This is where AWS and Denodo reinforce each other. AWS gives teams the scale and services to build modern analytics and AI workloads. Denodo gives those teams access to trusted enterprise data across hybrid environments without each of them having to build its own pipelines, integrations, or routes back into the data center.

Changing the Economics of Modernization

The most visible impact of this transformation is not architectural. It shows up in delivery effort, migration agility, and downstream change.

The bank reported the following outcomes:

- 70–90% reduction in integration effort

- 4 PB migrated to AWS in 3 months with zero downtime

- 2M queries/month across 1,300+ users

- Significant cloud cost optimization (reduced data movement and duplication)

- Real-time data alerts enabling faster fraud detection and decision-making

The bank also improved cost control. Reducing duplication and routing queries more intelligently across hybrid environments cut unnecessary data movement, and the bank reported significant savings in compute costs. Data access provisioning that previously took days could, in some cases, be completed within hours.

- Pan-African Bank Scales Governed Data Access on AWS, Cutting Integration Effort by 70–90% - May 11, 2026
