Allow me to introduce the (fictitious) Advanced Banking Corporation, or ABC for short. It may be advanced in name, but its IT systems are anything but. ABC will be our constant companion as we explore all three scenarios for data virtualization.

Alice Well, CIO since last Monday, has heard nothing but bad news from or about IT. The department is seen as a roadblock to every new development, and when a project does get started, it takes twice as long and costs 50% more than planned. Existing systems are old and slow; decision makers must make do with yesterday’s data, and then only if they’re lucky and the nightly batch jobs all worked. As if that weren’t enough, ABC has just announced the acquisition of a small, nimble rival that uses a Hadoop-based data lake for decision support, which, Alice knows, is largely incompatible with ABC’s legacy environment. She has been repeating her personal mantra “All is well, all will be well” almost non-stop since she arrived.

Alice’s first challenge has come from the very top. Unsurprisingly, it’s labeled “Urgent”.

“Why,” asks CEO, Bill Costly, “do agents in the new Call Center never have a customer’s most recent transactions to hand when taking a call?”

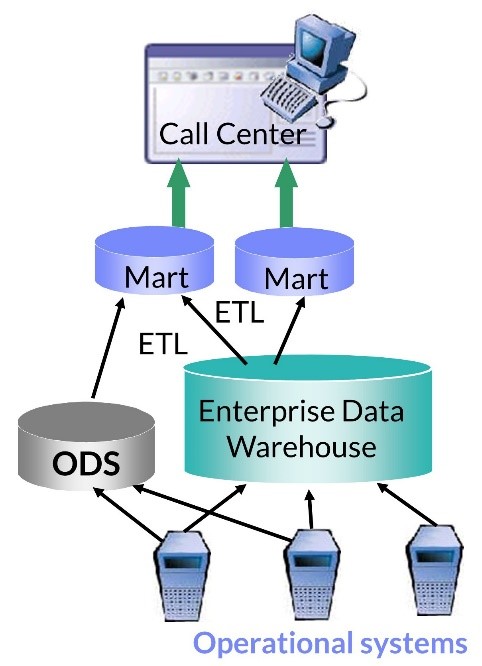

The answer, as Alice easily discovers, is that the system used by ABC’s Call Center was not designed from the ground up to deliver real-time data. As seen in the figure below, ABC’s data warehouse is a very traditional design, fed by batch ETL (extract, transform and load) tools from the operational checking, credit, and client management systems. The checking and credit extracts run nightly, but data from the client management system—a nasty mix of ancient code supplemented by spreadsheets—is only updated weekly, after manual checking by IT.

In the mid-1990s, ABC adopted the best practice of the time and built an operational data store (ODS) in an attempt to improve the timeliness of data provided to business users. This ODS is a largely unmodified store of data from the checking and credit systems, loaded hourly. Including the client management system in the ODS proved beyond the skills and budget of IT. Because the sources feed the warehouse and the ODS on different schedules (weekly, nightly, and hourly), the data an agent sees is never aligned to a single point in time, and call center agents struggle to answer all but the simplest of client enquiries.

Fortunately, Alice has recently attended an event organized by Denodo and a well-known consultant who showed and discussed the very pictures shown here!

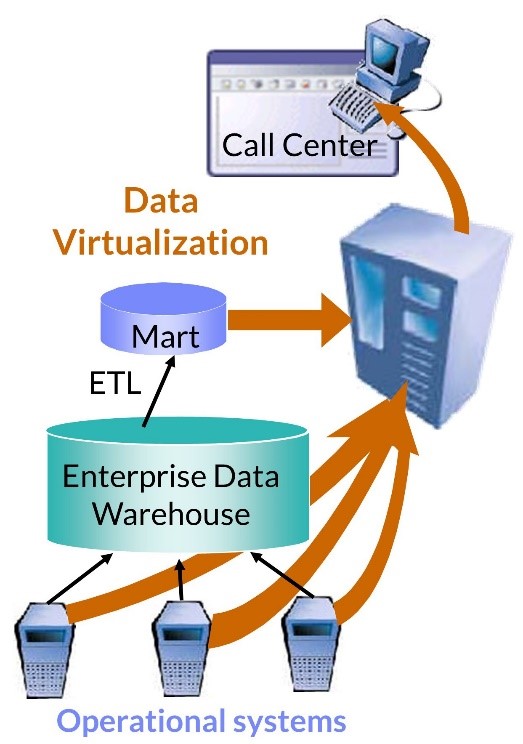

The new solution, based on data virtualization technology, is shown next.

Whenever an agent receives a client call, the call center app sends a query to the data virtualization (DV) server. The DV server then retrieves, in real time, three sets of data: the historical, integrated data about the client from the data warehouse via a previously developed mart; today’s client transactions, up to the second, from the operational checking and credit systems; and the most recent client status from the “spreadsheet enhancement” to the client management system. The DV server joins the data from the various systems and returns it to the agent.
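The flow just described can be sketched in a few lines of Python. This is a toy illustration only, not how Denodo or any other DV product is implemented: the three dictionaries stand in for the warehouse mart, the operational systems, and the spreadsheet, and every name in it (`WAREHOUSE_MART`, `client_view`, the client IDs, and so on) is hypothetical.

```python
# Hypothetical in-memory stand-ins for ABC's sources. A real DV server would
# reach these through source connectors, not Python dictionaries.
WAREHOUSE_MART = {
    "C001": {"name": "J. Doe", "avg_monthly_balance": 2450.0},
}
TODAYS_TRANSACTIONS = {
    "checking": [
        {"client_id": "C001", "amount": -40.0},
        {"client_id": "C002", "amount": 300.0},
    ],
    "credit": [
        {"client_id": "C001", "amount": -120.5},
    ],
}
CLIENT_STATUS_SHEET = {"C001": "address change pending"}


def fetch_history(client_id):
    """Integrated, historical data from the warehouse mart."""
    return WAREHOUSE_MART.get(client_id, {})


def fetch_todays_transactions(client_id):
    """Today's transactions, read from each operational system at call time."""
    return [
        {"system": system, **txn}
        for system, txns in TODAYS_TRANSACTIONS.items()
        for txn in txns
        if txn["client_id"] == client_id
    ]


def fetch_status(client_id):
    """Latest client status from the spreadsheet-based client data."""
    return CLIENT_STATUS_SHEET.get(client_id, "unknown")


def client_view(client_id):
    """Join the three sources on client_id into one answer for the agent."""
    return {
        "client_id": client_id,
        "history": fetch_history(client_id),
        "todays_transactions": fetch_todays_transactions(client_id),
        "status": fetch_status(client_id),
    }


view = client_view("C001")
print(view)
```

The point of the sketch is that nothing is copied ahead of time: each source is read when the call arrives, and the join happens on the client identifier at query time.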

Despite the simplicity and elegance of the solution, Alice will have to work hard to sell it to the BI manager, Mitch Adoo, whose nickname, “About Nothing”, reflects his strictly traditional approach to data warehousing. Among Mitch’s objections are data consistency, network loading, and the impact on performance of the operational systems. Each argument has some validity, of course, but Alice has good answers, and she holds a trump card: the CEO’s memo demanding urgent action.

It is true that more care is required when joining data in real time than in batch, where potential problems can be anticipated and solutions applied in retrospect. Alice is aware of these issues but is making a very deliberate trade-off between immediacy of insight and guaranteed data consistency. Here, the trade-off is justified by improved satisfaction for the majority of customers. The quality of the spreadsheet-based client management data is a major concern, but Alice is gambling that errors here will be of less concern to clients. And maybe she can use the savings from virtualization to fund some investment in fixing the base system problems.

She is also injecting added agility into the IT department. We’ll return to this aspect in greater depth in a later article. In this case, the ability of the IT department to deliver real-time data to business users based entirely on existing systems has the important side benefit of proving that the BI department is willing to try something “new”!

Oddly enough, novelty in this case is largely a market illusion. Data virtualization is now a mature technology, having been around in different forms since the early 1990s. However, like Mitch, the market has been largely unaware of the technical advances the products have made. On network load, Denodo, for example, uses multiple techniques to minimize traffic, including local caching of data and query optimization that takes into account the data volumes that need to be transferred between servers.

Regarding impact on operational systems’ performance, it certainly can be a problem if these systems are heavily loaded. However, it should be noted that the DV server is sending very specific queries to these sources, using fields that should normally be indexed, and getting results limited to a small number of rows. With such specific querying of operational sources, data virtualization in fact offers a far more efficient solution to joining current and historical data than the ODS approach.
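As a rough illustration of what such a specific query looks like, the sketch below uses Python's built-in `sqlite3` module as a stand-in for an operational system. The table, the index name `idx_txn_client`, and the data are invented for the example; the real checking and credit systems would have their own schemas and database engines.

```python
import sqlite3

# In-memory database standing in for an operational system (hypothetical schema).
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE transactions ("
    "id INTEGER PRIMARY KEY, client_id TEXT, amount REAL, posted_at TEXT)"
)
# The index on client_id is what keeps the per-client lookup cheap.
conn.execute("CREATE INDEX idx_txn_client ON transactions (client_id)")

# 5,000 synthetic rows spread evenly across 500 clients.
rows = [
    (f"C{n % 500:03d}", float(n), f"2024-01-01T00:{n % 60:02d}:00")
    for n in range(5000)
]
conn.executemany(
    "INSERT INTO transactions (client_id, amount, posted_at) VALUES (?, ?, ?)",
    rows,
)

# The kind of query a DV server might push down: one client, indexed field,
# and a cap on the number of rows returned.
query = (
    "SELECT amount, posted_at FROM transactions "
    "WHERE client_id = ? ORDER BY posted_at DESC LIMIT 20"
)

plan = conn.execute("EXPLAIN QUERY PLAN " + query, ("C042",)).fetchall()
result = conn.execute(query, ("C042",)).fetchall()

for step in plan:
    print(step[-1])  # human-readable plan step; should mention the index
print(len(result), "rows returned")
```

The query plan shows a search on the index rather than a scan of the whole table, and only a handful of rows cross the network, which is the behavior the argument above relies on.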

And so, this is how Alice wins the first battle in her war to drag ABC’s IT systems into the 21st century. In my next post, we’ll see her set sail on that newly acquired data lake.
